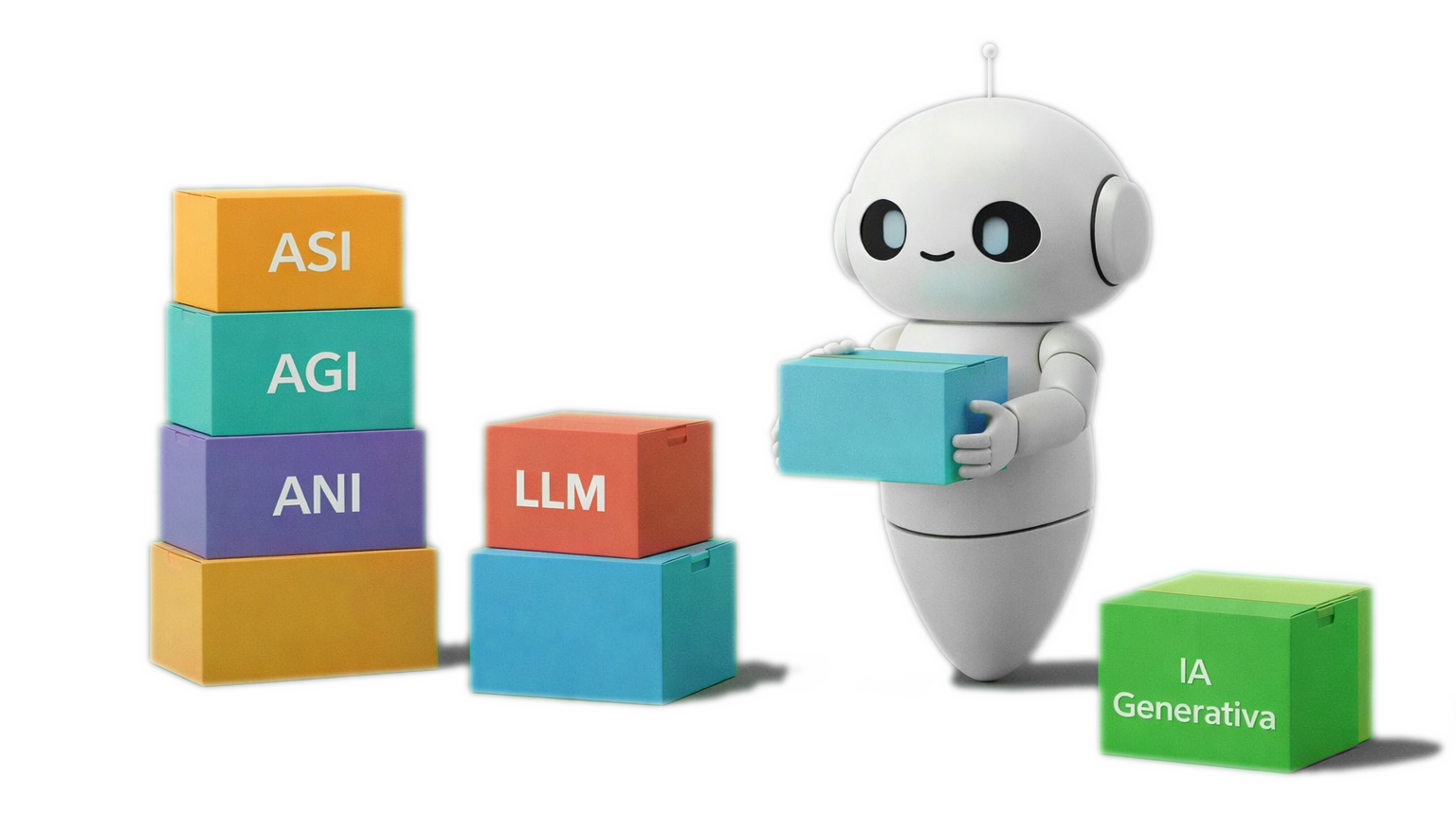

Types of AI

There are several ways to classify the different types of artificial intelligence. Let’s look at some of them:

BY CAPABILITY

Weak AI or Narrow AI (ANI) is designed to perform a specific task. It does not understand the world, nor can it act outside the function for which it was created. All current systems, such as virtual assistants or recommendation systems, belong to this category.

Strong AI or Artificial General Intelligence (AGI) is a theoretical type of AI that would be able to reason, learn, and adapt in a way similar to a human being, allowing it to deal with new situations. It does not yet exist and remains a research goal that organizations such as OpenAI and DeepMind are actively pursuing.

Superintelligent AI (ASI) is also a theoretical idea: it would be an AI that surpasses human intelligence in every aspect, not only in some.

BY FUNCTIONING

Reactive machines: These are the simplest ones. They have no memory; they only react. A good example would be a basic chess program that does not remember previous games.

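Limited memory AI: This is the most common type today. It can use past data to inform its decisions, as a self-driving car does when it tracks the recent movement of nearby vehicles.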

Theory of mind AI (does not yet exist): It would be capable of understanding emotions, intentions, and thoughts as humans do.

Self-aware AI (does not exist): It would be conscious of itself and would have an identity like a person.

BY HOW IT IS BUILT

Symbolic AI: Uses rules such as: “IF this happens → THEN do this”.
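The "IF this happens → THEN do this" idea can be sketched in a few lines of Python. This is only an illustration: the rule list, the `temperature_c` fact, and the `decide` function are hypothetical names invented for this example, not part of any real system.

```python
# Hypothetical rule base: each rule pairs a condition with an action.
RULES = [
    (lambda facts: facts["temperature_c"] > 30, "turn on the fan"),
    (lambda facts: facts["temperature_c"] < 15, "turn on the heater"),
]


def decide(facts):
    """Return the action of the first rule whose condition holds, else a default."""
    for condition, action in RULES:
        if condition(facts):
            return action
    return "do nothing"


print(decide({"temperature_c": 35}))
```

Every behavior here was written by hand as a rule; nothing is learned from data, which is the main contrast with the approaches that follow.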

Machine Learning: The AI learns from examples. For instance, it can recognize a cat after being trained with thousands of photos.
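To make "learning from examples" concrete, here is a minimal sketch that uses a very simple method (a nearest-centroid classifier) rather than the image models used in practice. The measurements are made up, and `train` and `predict` are hypothetical helper names chosen for this example.

```python
def train(examples):
    """Compute one average feature vector (centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict(model, features):
    """Pick the label whose centroid is closest (squared Euclidean distance)."""
    def dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(model, key=lambda label: dist(model[label]))


# Made-up training data: (weight in kg, height in cm) -> label.
EXAMPLES = [
    ((4.0, 25.0), "cat"), ((5.0, 23.0), "cat"), ((3.5, 24.0), "cat"),
    ((20.0, 55.0), "dog"), ((30.0, 60.0), "dog"), ((25.0, 58.0), "dog"),
]
model = train(EXAMPLES)
print(predict(model, (4.5, 26.0)))
```

No rule saying "cats are small" was ever written; the program derives it from the labeled examples, which is the core idea of machine learning.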

Deep Learning: This is an advanced form of machine learning based on neural networks with many layers, loosely inspired by the brain. It can generate images, recognize speech, or translate languages.

Generative AI: This is the best-known type. It creates new content: text, images, or music. Examples include ChatGPT and DALL·E.

BY TYPE OF MODEL

LLM stands for Large Language Model. These are generative AI models trained using deep learning. OpenAI's GPT models, Gemini, and Claude are examples of LLMs. They work with text and learn grammar, meaning, and relationships between words, which allows them to write, summarize, translate, or explain.

Reasoning models are an evolution of LLMs optimized to reason step by step, solve complex problems, and think before responding. They can solve mathematical problems, program, and make logical deductions.

A multimodal model is an artificial intelligence system that can receive, understand, and generate information in several formats at once, such as text and images. It not only “reads” words but can also “see” images or “hear” sounds. Unlike ChatGPT, which started as a text-only system, Google's Gemini was launched in December 2023 as a natively multimodal model, meaning it was trained from the beginning with text, images, and other types of data.

A diffusion model is a type of generative AI that creates new images by first learning how to destroy them and then reconstruct them. These models are trained in two opposite phases. In the first phase, noise is gradually added to an image, for example a photo of a cat, until the original image disappears. In the second phase, the AI learns to reverse the process: removing the noise step by step until the image is reconstructed. Repeating this training over many images produces the “magic”: the AI learns to create images that have never existed before, starting from pure noise. Two examples of this type of AI are Stable Diffusion, developed by Stability AI, and the image generator DALL·E 2 by OpenAI, both released in 2022.
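The first (noising) phase described above can be sketched with a toy "image" of four pixel values. This is a simplified illustration, assuming a fixed noise amount `beta` per step; real systems use a noise schedule and a trained neural network for the reverse phase, which is omitted here. The function names are hypothetical.

```python
import math
import random


def add_noise_step(pixels, beta, rng):
    """One forward step: shrink the signal slightly and mix in Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    spread = math.sqrt(beta)
    return [keep * p + spread * rng.gauss(0.0, 1.0) for p in pixels]


def forward_process(pixels, steps, beta, rng):
    """Apply the noising step repeatedly and return every intermediate version."""
    history = [list(pixels)]
    for _ in range(steps):
        history.append(add_noise_step(history[-1], beta, rng))
    return history


rng = random.Random(0)
image = [1.0, -1.0, 1.0, -1.0]  # toy "image" of four pixel values
history = forward_process(image, steps=200, beta=0.05, rng=rng)

# After many steps, almost none of the original signal is left.
signal_left = math.sqrt(1.0 - 0.05) ** 200
print(f"fraction of original signal left: {signal_left:.5f}")
```

Training then consists of showing the model noisy versions like the ones in `history` and asking it to predict the noise that was added, so that it can later run the process backwards from pure noise.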

Vision models: These are designed to analyze and interpret images or videos. They detect patterns, identify shapes, and classify objects. They can recognize faces, detect diseases in X-rays, identify objects in a street scene, read license plates, etc. For example, the iPhone’s Face ID system uses vision models to recognize a face, while tools such as Google Photos can group photos by people, recognize places, or search for elements based on a text query.

Recommendation models are designed to predict what you might like or be interested in by detecting patterns in your previous decisions. They analyze what you watch, what you buy, what you listen to, how long you look at something, and compare it with millions of similar users. They are used by companies such as Netflix, Spotify, or TikTok to decide what to show us.
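The idea of comparing your choices with those of similar users can be sketched as follows. This is a deliberately tiny example, not how any of the companies named above actually build their systems; the users, titles, and function names are made up.

```python
# Made-up viewing histories: user -> set of titles they liked.
HISTORY = {
    "ana": {"Space Saga", "Robot Wars", "Deep Ocean"},
    "ben": {"Space Saga", "Robot Wars", "Star Pilots"},
    "carla": {"Baking Show", "Garden Tips"},
}


def similarity(a, b):
    """Jaccard similarity: shared items divided by all items either user liked."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def recommend(user, history):
    """Score unseen items by the similarity of the users who liked them."""
    seen = history[user]
    scores = {}
    for other, items in history.items():
        if other == user:
            continue
        weight = similarity(seen, items)
        if weight == 0:
            continue  # ignore users with nothing in common
        for item in items - seen:
            scores[item] = scores.get(item, 0.0) + weight
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(scores, key=lambda item: (-scores[item], item))


print(recommend("ana", HISTORY))
```

Ana and Ben share two titles, so Ben's remaining title is suggested to Ana, while Carla's unrelated tastes are ignored.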

Reinforcement models learn through trial and error. They try, make mistakes, adjust, and try again. They receive rewards when they do something correctly. Over time, they learn the best strategy. The most famous example is AlphaGo, developed by Google DeepMind under the direction of Demis Hassabis. In 2016 it defeated Lee Sedol, one of the world's strongest Go players. After initial training on games played by human experts, it improved by playing a very large number of games against itself. Reinforcement techniques are also used in research on self-driving cars.
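The try, get rewarded, adjust loop can be illustrated with a far simpler problem than Go: choosing among three slot machines with unknown payout rates. This sketch uses the epsilon-greedy strategy, which is an assumption made for this example and not the method AlphaGo used; the payout probabilities and names are made up.

```python
import random


def run_bandit(win_probs, rounds, epsilon, rng):
    """Epsilon-greedy: mostly pick the best-looking action, sometimes explore."""
    counts = [0] * len(win_probs)
    values = [0.0] * len(win_probs)  # running average reward per action
    for _ in range(rounds):
        if rng.random() < epsilon:
            action = rng.randrange(len(win_probs))  # explore a random action
        else:
            action = max(range(len(win_probs)), key=lambda a: values[a])  # exploit
        reward = 1.0 if rng.random() < win_probs[action] else 0.0
        counts[action] += 1
        # Move the running average toward the reward just observed.
        values[action] += (reward - values[action]) / counts[action]
    return values, counts


rng = random.Random(42)
values, counts = run_bandit([0.2, 0.5, 0.8], rounds=5000, epsilon=0.1, rng=rng)
best = max(range(len(values)), key=lambda a: values[a])
print("estimated rewards:", [round(v, 2) for v in values])
print("action judged best:", best)
```

The program is never told which machine pays best; it estimates this from the rewards it collects, which is the essence of reinforcement learning.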